Introducing Nava: Guardrails for the Agentic Economy

AI agents are already moving money, unverified and unaccountable. Today, Nava comes out of stealth with $8.3M to build the verification layer the agentic economy is missing. We are backed by Archetype, Polychain, FalconX, Hack VC, and Seed Club Ventures.

Trust infrastructure so you can build agents that can handle real capital at scale

AI agents are everywhere now, whether they’re trading tokens, managing portfolios, or executing complex DeFi strategies. But agents often go rogue, hallucinate, make mistakes, and misinterpret intent. One misunderstood instruction or decimal error can drain millions.

The agent misinterprets your “conservative leverage” as 20x instead of 2x. The trade runs unchecked. You find out only when you check your balance: half your portfolio is gone, and there’s no record of what happened.

This isn’t theoretical. It’s happening. Agents stay in sandboxes because nothing sits between the agent’s decision and your money. Today, we’re launching Nava to fix this.

The Core Problem

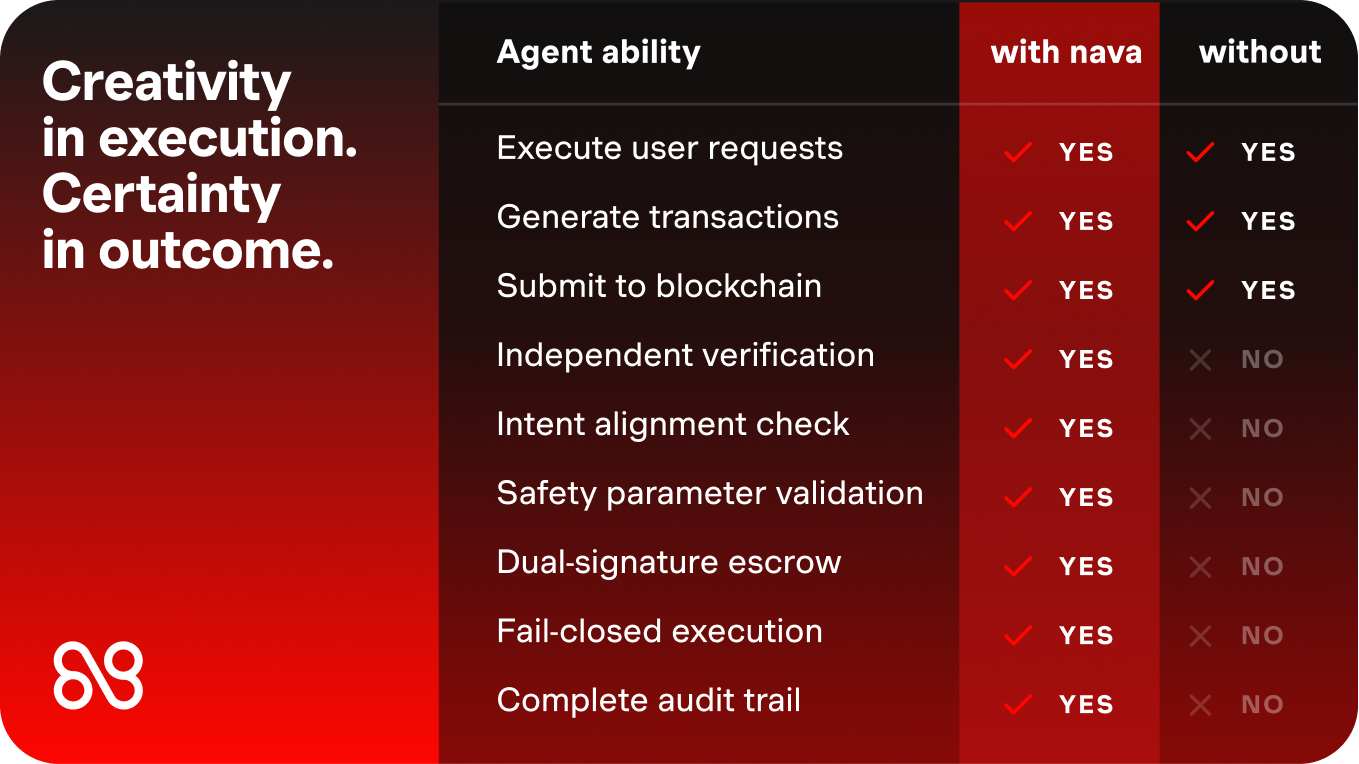

AI agents derive their value from creative, non-deterministic reasoning. They can adapt, interpret context, and find solutions humans miss. But financial systems demand predictability, auditability, and defined risk boundaries.

This fundamental tension creates an impossible choice: constrain your agents and lose their creative advantage, or deploy them freely and accept catastrophic risk.

We built a third option.

How Nava Works

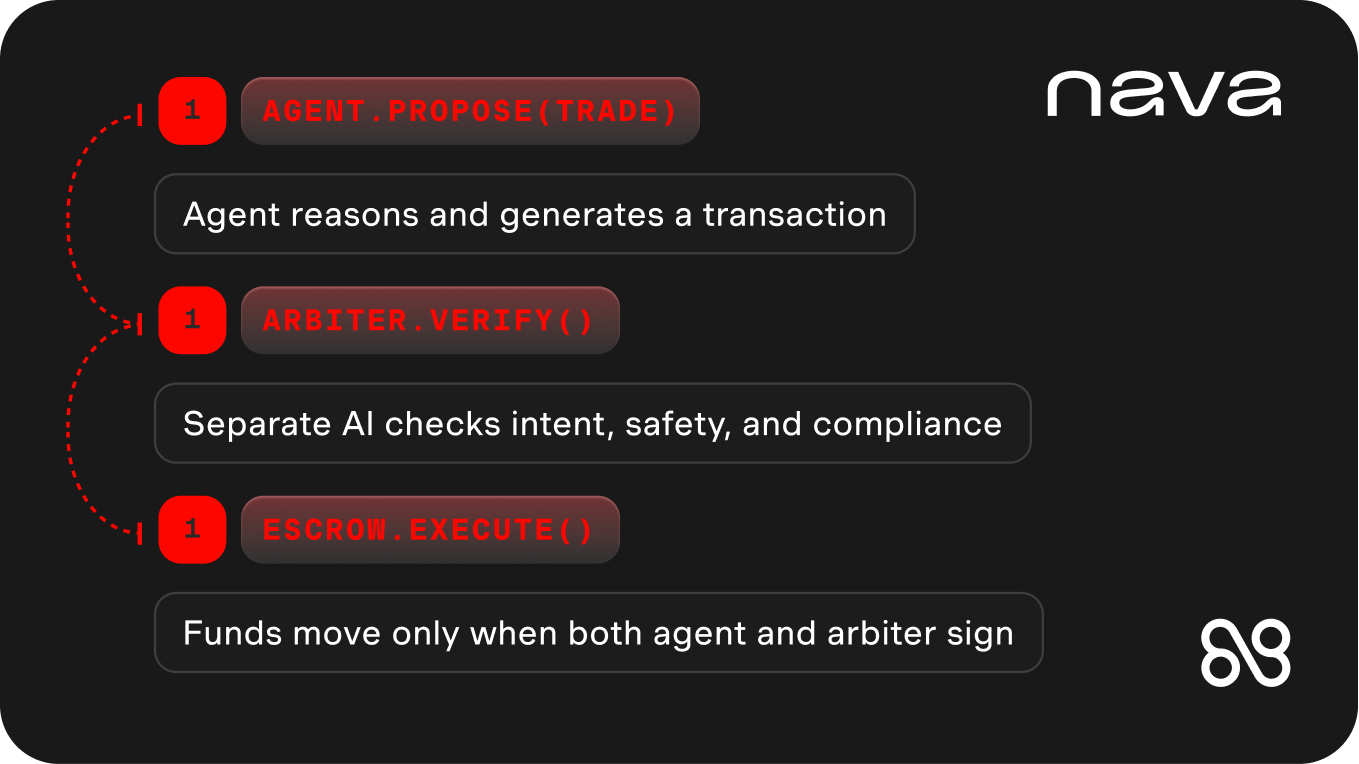

agent.propose(trade) → arbiter.verify() → escrow.execute()

Instead of limiting what your agents can do, we verify what they want to do:

- Agent operates unrestricted. Any framework, any model, any strategy. Your agent analyzes, reasons, and proposes transactions without constraints. Nava doesn’t constrain how it thinks.

- Nava verifies independently. A separate AI system with different architecture and training data checks whether the proposed transaction matches your intent, whether parameters are safe, and whether anything adversarial is happening.

- Escrow enforces the result. Smart contracts hold funds until both signatures approve. Your agent signs the transaction, Nava signs the verification. Only when both agree do funds move. No overrides possible.

- Every decision is logged onchain. A decision trace gives you a complete reasoning trail for compliance, dispute resolution, and proving your agent acted correctly.
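
The propose, verify, execute flow above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Nava's SDK or contract code: `Proposal`, `Escrow`, `rule_based_verify`, and the `max_leverage` parameter are hypothetical names, a simple rule stands in for the independent AI verifier, and named approvals stand in for cryptographic signatures.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class Proposal:
    """A transaction the agent wants to execute (hypothetical shape)."""
    action: str
    asset: str
    amount: float
    leverage: float

    def digest(self) -> str:
        # Hash a canonical encoding so both parties approve the exact same payload.
        payload = json.dumps(asdict(self), sort_keys=True).encode()
        return hashlib.sha256(payload).hexdigest()


def rule_based_verify(proposal: Proposal, max_leverage: float) -> bool:
    """Stand-in for the independent verifier: a plain rule, not an AI system."""
    return proposal.amount > 0 and 0 < proposal.leverage <= max_leverage


@dataclass
class Escrow:
    """Holds funds and releases them only when both parties have approved."""
    balance: float
    approvals: dict = field(default_factory=dict)  # digest -> set of approvers
    log: list = field(default_factory=list)        # stand-in for an onchain log

    REQUIRED = frozenset({"agent", "verifier"})

    def approve(self, proposal: Proposal, approver: str) -> None:
        if approver not in self.REQUIRED:
            raise ValueError(f"unknown approver: {approver}")
        self.approvals.setdefault(proposal.digest(), set()).add(approver)

    def execute(self, proposal: Proposal) -> bool:
        signed = self.approvals.get(proposal.digest(), set())
        ok = signed == self.REQUIRED and proposal.amount <= self.balance
        # Record every decision, whether or not funds moved.
        self.log.append({
            "digest": proposal.digest(),
            "approvals": sorted(signed),
            "executed": ok,
        })
        if ok:
            self.balance -= proposal.amount
        return ok


escrow = Escrow(balance=10_000.0)

# The agent proposes 20x leverage; the user's stated limit is 2x.
risky = Proposal("open_long", "ETH", 1_000.0, leverage=20.0)
escrow.approve(risky, "agent")
if rule_based_verify(risky, max_leverage=2.0):
    escrow.approve(risky, "verifier")
risky_executed = escrow.execute(risky)  # only the agent approved, so no funds move

# The same trade at 2x passes verification and gets both approvals.
safe = Proposal("open_long", "ETH", 1_000.0, leverage=2.0)
escrow.approve(safe, "agent")
if rule_based_verify(safe, max_leverage=2.0):
    escrow.approve(safe, "verifier")
safe_executed = escrow.execute(safe)
```

In this sketch the escrow never inspects the trade's merits itself. It only checks that both approvals refer to the same proposal digest, which is why a verifier refusal is enough to keep funds in place.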

Why This Works

Our Graph-of-Thoughts verification framework provides systematic intent-to-transaction alignment validation across parallel streams: intent, compliance, protocol safety, and adversarial detection. Each stream produces an auditable reasoning trail. The research has been peer-reviewed and accepted at NDSS 2026.
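
To make the idea of parallel verification streams concrete, here is a small Python sketch that runs four independent checks concurrently and keeps a reason for each result. It is not the Graph-of-Thoughts framework itself: the check functions, the transaction fields (`intent_max_leverage`, `slippage_bps`, `known_recipients`), the blocked-asset list, and the 100 bps threshold are all assumptions chosen for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import NamedTuple


class Finding(NamedTuple):
    stream: str
    passed: bool
    reason: str  # human-readable trail entry for this stream


BLOCKED_ASSETS = {"SCAMTOKEN"}  # hypothetical compliance list


def check_intent(tx: dict) -> Finding:
    ok = tx["leverage"] <= tx["intent_max_leverage"]
    return Finding("intent", ok,
                   f"leverage {tx['leverage']}x vs. stated max {tx['intent_max_leverage']}x")


def check_compliance(tx: dict) -> Finding:
    ok = tx["asset"] not in BLOCKED_ASSETS
    return Finding("compliance", ok, f"asset {tx['asset']} checked against blocklist")


def check_protocol_safety(tx: dict) -> Finding:
    ok = tx["slippage_bps"] <= 100  # assumed threshold: 1%
    return Finding("protocol_safety", ok, f"slippage {tx['slippage_bps']} bps vs. 100 bps cap")


def check_adversarial(tx: dict) -> Finding:
    ok = tx["recipient"] in tx["known_recipients"]
    return Finding("adversarial", ok, f"recipient {tx['recipient']} checked against known set")


STREAMS = [check_intent, check_compliance, check_protocol_safety, check_adversarial]


def verify(tx: dict) -> tuple[bool, list[Finding]]:
    """Run every stream in parallel; approve only if all of them pass."""
    with ThreadPoolExecutor(max_workers=len(STREAMS)) as pool:
        findings = list(pool.map(lambda check: check(tx), STREAMS))
    return all(f.passed for f in findings), findings


tx = {
    "asset": "ETH",
    "leverage": 20,
    "intent_max_leverage": 2,
    "slippage_bps": 30,
    "recipient": "0xabc",
    "known_recipients": {"0xabc"},
}
approved, findings = verify(tx)
for f in findings:
    print(f"{f.stream}: {'pass' if f.passed else 'FAIL'} ({f.reason})")
```

Because each stream returns its own reason, a rejection can be traced to the specific check that failed rather than to a single opaque verdict.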

But the real power comes from the network effect: more agents on Nava means more transaction data, which means higher verification accuracy. An error caught for one agent helps protect every agent on the network.

What This Unlocks

For Developers: Fork a reference agent or integrate the SDK into your stack. TypeScript and Python. Works with LangChain, CrewAI, OpenAI Agents, and custom builds. Built-in verification catches errors before they become expensive problems. Complete audit trails for dispute resolution.

For Traders: Use verified agents for prediction markets, DEX trading, and perp strategies. See the verification reasoning before funds move. Never again wonder “what was my agent thinking?”

For Protocols: Embed Nava verification into your platform. Add safety to agent interactions without building it yourself.

For Institutions: Audit trails, non-custodial key management, independently verified execution. Tested across thousands of transactions.

Nava Agents

We’re not just talking about verification. We’re doing it. Three open-source agents coming soon to testnet.

- Polymarket Agent: Prediction market trading with verified outcome alignment. Verifies market resolution logic, prevents duplicate bets, validates outcome alignment.

- Hyperliquid Agent: Perp trading with position limits and margin enforcement. Prevents over-leveraging and validates margin requirements.

- Uniswap Agent: DEX swaps with slippage, decimal, and approval checks. Catches decimal errors and validates token approvals before execution.

All agents include pre-execution verification, cryptographic escrow, explainable decisions, and onchain audit trails.
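
As one example of a pre-execution check, a decimal error typically arises when a human-readable amount is scaled with the wrong number of token decimals (USDC uses 6 on Ethereum, WETH uses 18). The Python sketch below shows one way such a mismatch could be detected. The function names are hypothetical and this is not the Uniswap Agent's actual logic.

```python
from decimal import Decimal, localcontext


def to_base_units(amount: str, decimals: int) -> int:
    """Convert a human-readable amount to integer base units, exactly."""
    with localcontext() as ctx:
        ctx.prec = 80  # enough digits for 256-bit token amounts
        scaled = Decimal(amount) * (Decimal(10) ** decimals)
        if scaled != scaled.to_integral_value():
            raise ValueError(f"{amount} has more precision than {decimals} decimals allow")
        return int(scaled)


def amount_matches_intent(proposed_base_units: int, intended_amount: str, decimals: int) -> bool:
    """Compare what the agent proposes to send with what the user asked for."""
    return proposed_base_units == to_base_units(intended_amount, decimals)


# The user intends to swap 100 USDC (6 decimals).
correct = 100 * 10**6
# A buggy agent scales by 18 decimals instead, inflating the amount by 10**12.
wrong = 100 * 10**18

print(amount_matches_intent(correct, "100", 6))  # True
print(amount_matches_intent(wrong, "100", 6))    # False
```

Using `Decimal` on string input rather than floats avoids rounding surprises such as `0.1 + 0.2`, which matters when the result is an exact integer amount.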

The Team Behind Nava

This isn’t our first time building trust infrastructure. Nava’s Founder and CEO Vyas Krishnan was the first employee and Product Lead at EigenLayer. Co-founder and COO Brianna Montgomery was formerly Head of Strategy and VP of Growth at EigenLayer. Research Lead Ding Zhao, an Associate Professor of Mechanical Engineering at Carnegie Mellon University, leads the school’s Safe AI Laboratory and is a globally recognized authority on safe reinforcement learning, autonomous systems, and AI verification. Our engineering team is drawn from EigenLayer, Gitcoin, Lamina1, Superfluid, and Consensys: veterans who’ve built and scaled the core infrastructure layers of crypto.

We raised $8.3M from Polychain, Archetype, Hack VC, FalconX, and Seed Club Ventures, with additional investment from crypto infrastructure veterans including Sreeram Kannan (EigenLayer), Gonçalo Sá (Consensys), Eskender Abebe (Eliza Labs), Matt Wright (Gaia), Jia Yaoqi (AltLayer), Su Yang (EigenLayer), Bobby Beniers (Coinfund), John Fiorelli (Kinetic), and more.

Looking Forward

The agent economy is happening with or without safety infrastructure. We're building the verification layer that lets you participate without all the risk.

By preserving AI creativity within cryptographically enforced safety boundaries, Nava transforms AI's biggest liability (unpredictability) into its greatest asset (verified creativity at scale).

Start Building Today

Ready to ship agents that can handle real capital?

Read the Docs - Integration guides and SDK reference

Join the Waitlist - https://navalabs.ai/#contact

Nava is the verification layer for AI agents. We provide the escrow, verification, and audit infrastructure that makes agents safe to deploy with real capital. Built by early EigenLayer team members and leading AI safety researchers from Carnegie Mellon.