Ethereum as AI's economic layer | What the stack still needs

As AI agents move from generating text to managing capital, the stack needs more than settlement rails. It needs verification. Nava builds the accountability layer that makes autonomous agents trustworthy enough to operate inside Ethereum's economic infrastructure.

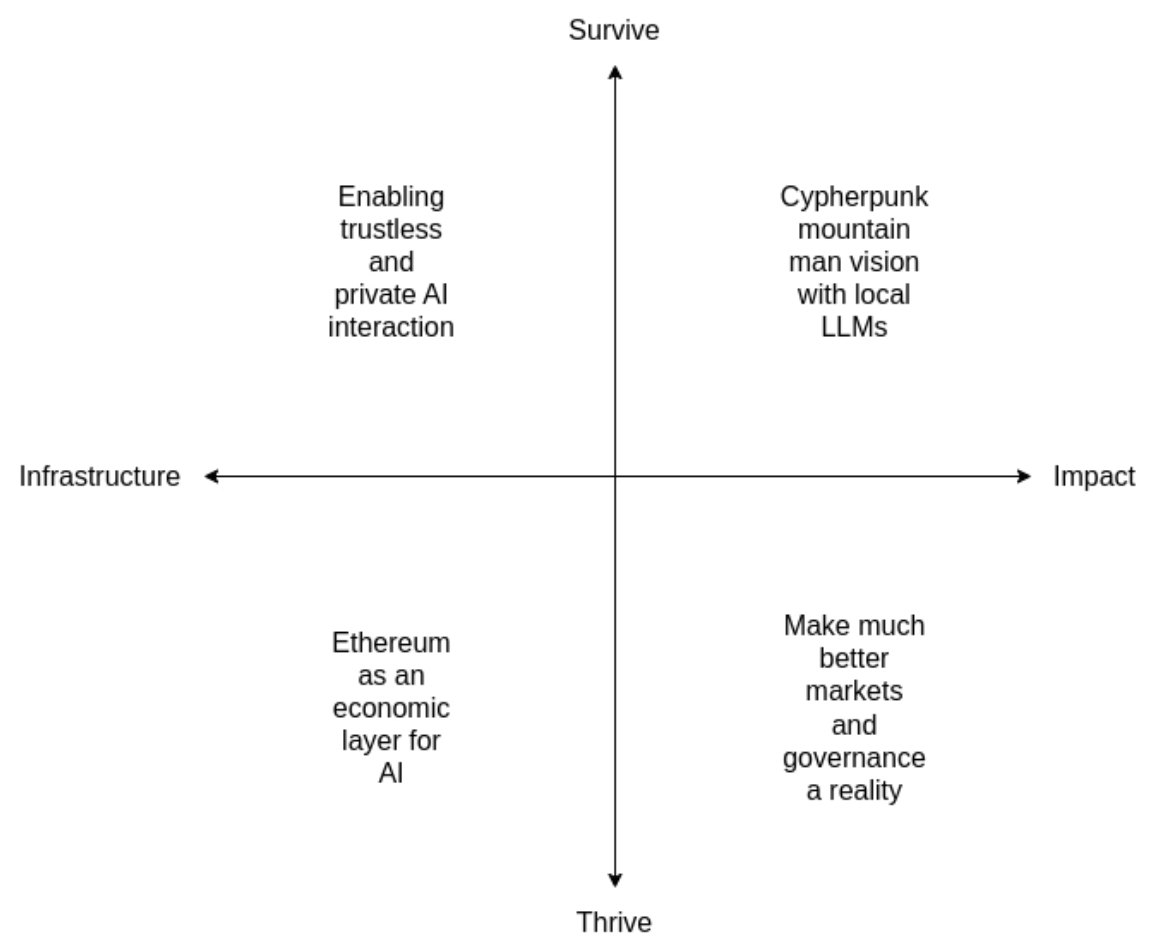

The intersection of AI and crypto has long promised synergies that have proven elusive in practice. Vitalik's recent post frames this challenge well: while the surface-level connections feel obvious (crypto's decentralization balancing AI's centralization, transparency countering opacity), the actual applications have remained disappointingly sparse. The core tension is that crypto's open-source ethos conflicts with AI's vulnerability to adversarial attacks when exposed. However, Vitalik identifies a particularly promising category: "AI as a player in a game," where AI systems participate in mechanisms with human-derived incentives rather than replacing human judgment entirely.

This is a massive opportunity. AI agents are maturing from simple workflow tools into autonomous economic actors, i.e., systems capable of managing complex financial operations, not just executing pre-scripted transactions. Yet a fundamental barrier prevents their deployment at scale: the unpredictability that makes AI creative also makes it financially dangerous. One misinterpreted instruction or decimal error can drain treasuries worth millions. Traditional finance demands predictable, auditable outcomes, which is exactly what probabilistic AI systems cannot guarantee.

Nobody Owns the AI Layer

Every cycle, crypto produces a race to claim a "layer." DeFi summer had its settlement wars. The L2 era had its scalability wars. Now the prize everyone is reaching for is "the AI layer." Vitalik positions Ethereum as the economic layer for AI. Sreeram Kannan has been arguing for years that decentralization's real value proposition is verifiable AI. NEAR calls itself the AI-native L1. Story Protocol made an early bet on IP infrastructure for AI. It is an interesting thesis, though for most players in this space the gap between narrative and revenue is still considerable.

The pattern is familiar. Claim a layer. Mint a token. Ship a narrative.

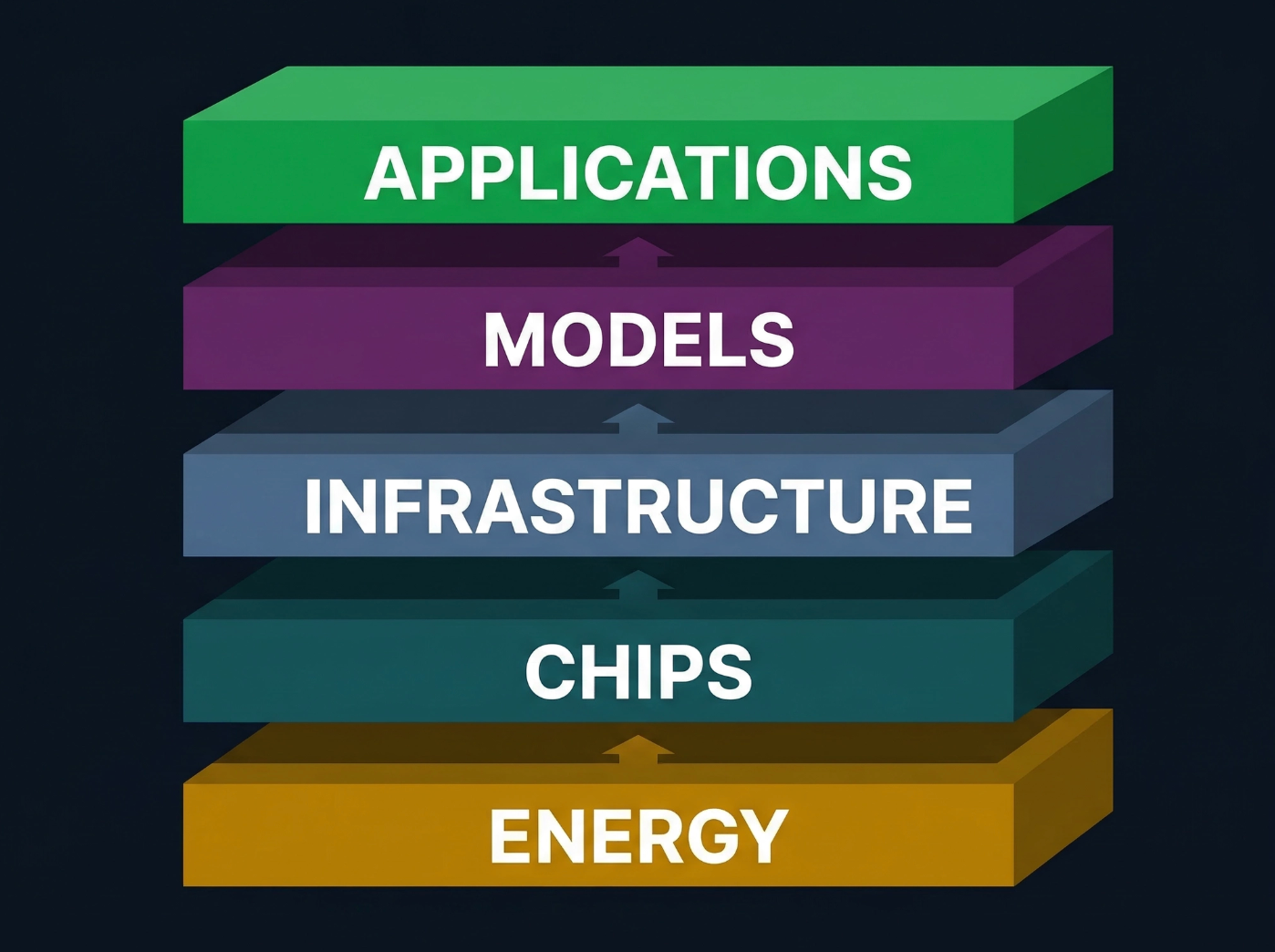

At Davos this year, Jensen Huang offered a more useful framework: what he calls the five-layer AI stack — Energy → Chips → Infrastructure → Models → Applications.

Every successful AI application pulls on every layer beneath it, all the way down to the power plant keeping it alive. This is not a set of interchangeable protocol choices. It is a physical supply chain. Intelligence is generated in real time, and the entire computing stack beneath it had to be reinvented to support that.

When Vitalik argues that Ethereum should be the economic layer for AI, he is making a claim about the infrastructure layer of this stack. Ethereum, as a decentralized network running a common virtual machine, offers a service there — settlement, programmable escrow, dispute resolution. But it is not clear that Ethereum's core property, decentralized consensus, has inherent demand from the AI stack unless it is applied in a way that solves a specific problem AI workloads actually face. Decentralization for its own sake does not appear in Jensen's framework. The demand has to be earned, not assumed.

The honest framing: decentralized networks can participate in the AI stack, but only if they solve problems the stack actually has.

The Missing Piece: Accountability

Jensen's framework describes how intelligence is produced. It does not describe how intelligence is held accountable.

As AI agents move from generating text to managing capital, signing contracts, and executing trades with real consequences, the question shifts from "can the model do this?" to "did the model do what it was supposed to do?" An AI agent executing a $50,000 trade does not need a faster chip. It needs something to verify that the trade it executed actually matches the intent of the human who authorized it. Catastrophic failures will originate in the gap between intent and execution: not from models being too slow or too expensive, but from models being unaccountable.

This is where the properties of decentralized systems become genuinely useful, because verification is a problem that benefits from separating the entity that executes a transaction from the entity that verifies it was correct.

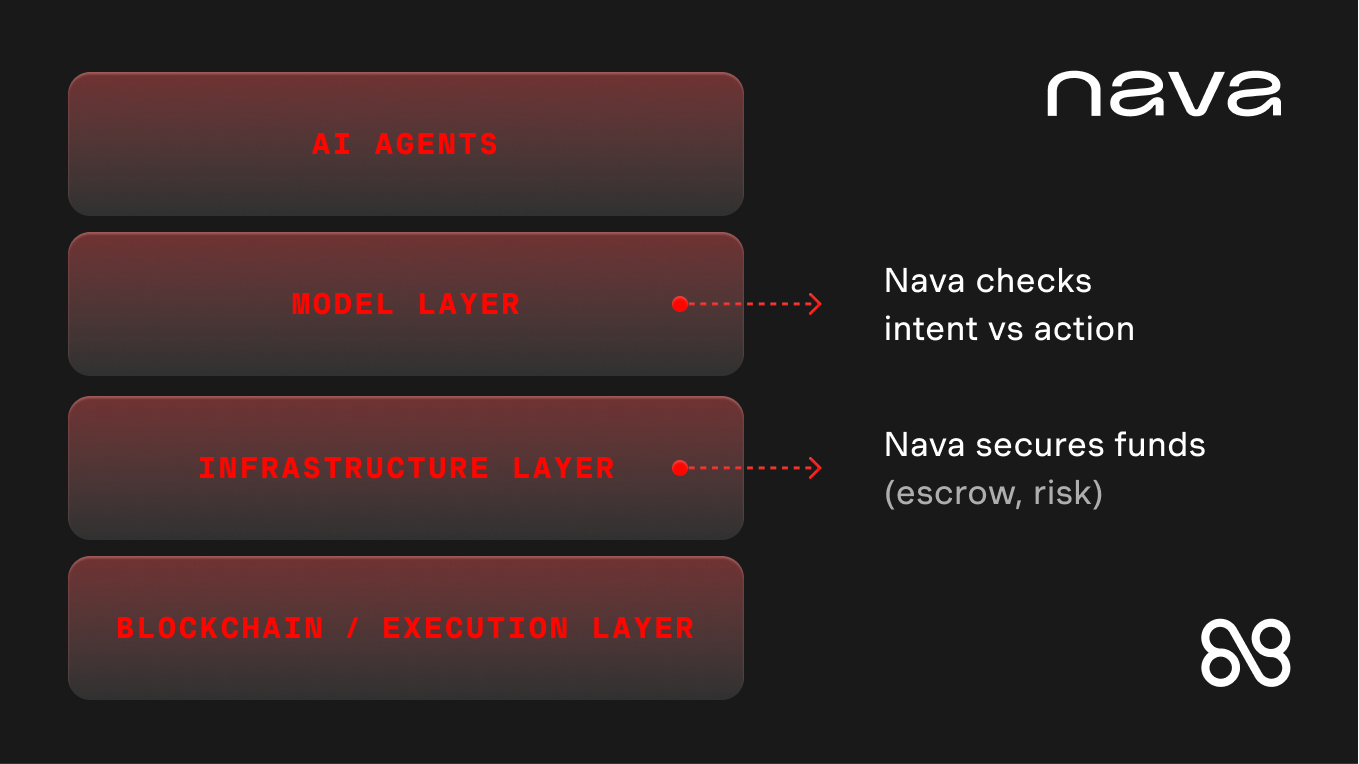

Nava's Position in the Stack

Nava is building the guardrails that preserve AI creativity while providing cryptographic guarantees of safety. Our approach aligns with Vitalik's vision of AI-as-player rather than AI-as-rule: instead of having AI systems directly control protocols, we enable them to participate in a verification process where independent systems validate each other's decisions. Your agent proposes a transaction, our independent Arbiter verifies it meets your intent and safety parameters, and escrow contracts enforce the result. This dual-agent architecture creates the accountability layer that developers and institutions need while preserving the creative problem-solving that makes AI valuable.
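The propose, verify, enforce flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Nava's implementation: the names `Intent`, `Proposal`, `arbiter_verify`, and `Escrow` are hypothetical, the checks are simple rule comparisons rather than a model, and the escrow is an in-memory object rather than an on-chain contract.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intent:
    """What the human authorized (hypothetical fields)."""
    asset: str
    max_amount: int               # in the asset's smallest unit
    allowed_recipients: frozenset


@dataclass(frozen=True)
class Proposal:
    """What the agent wants to execute (hypothetical fields)."""
    asset: str
    amount: int
    recipient: str


def arbiter_verify(intent: Intent, proposal: Proposal) -> list:
    """Independent check; returns violations, empty list means approved."""
    violations = []
    if proposal.asset != intent.asset:
        violations.append("asset mismatch")
    if proposal.amount > intent.max_amount:
        violations.append("amount exceeds limit")
    if proposal.recipient not in intent.allowed_recipients:
        violations.append("recipient not allowed")
    return violations


class Escrow:
    """Holds funds and releases them only for approved proposals."""

    def __init__(self, balance: int):
        self.balance = balance

    def settle(self, intent: Intent, proposal: Proposal) -> bool:
        violations = arbiter_verify(intent, proposal)
        if violations or proposal.amount > self.balance:
            return False          # funds stay in escrow
        self.balance -= proposal.amount
        return True


intent = Intent("USDC", 50_000, frozenset({"0xabc"}))
escrow = Escrow(100_000)
ok = escrow.settle(intent, Proposal("USDC", 50_000, "0xabc"))
# A decimal slip (500,000 instead of 50,000) is rejected before funds move.
slip = escrow.settle(intent, Proposal("USDC", 500_000, "0xabc"))
```

The point of the separation is that the agent never touches the funds directly: the only path to settlement runs through a check the agent does not control.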

Nava does not sit outside the AI stack. We operate inside it, at two layers simultaneously.

At the infrastructure layer, we build the financial plumbing AI agents need to operate safely: escrow services that ensure user funds are not lost when agents transact autonomously, and chargeback and insurance mechanisms for assessing risk in agentic transactions. This is infrastructure that did not need to exist until agents started handling real money.

At the model layer, we build something without clean precedent: a verification model. A model whose purpose is to sit alongside every other model in the stack and answer one question: did this agent's action match its human's intent? The verification model outputs structured validation graphs that trace the reasoning path from intent to execution, decomposing a transaction into its constituent claims and checking each against protocol-specific constraints, semantic alignment, and adversarial safety conditions.
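To make the idea of a validation graph concrete, here is a toy sketch of decomposing a transaction into claims and checking each one in dependency order. Everything in it is an assumption for illustration: the `Claim` structure, the claim names, and the status labels are hypothetical, the checks are hand-written predicates rather than model outputs, and the graph is assumed to be acyclic.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Claim:
    """One node in the graph: a named check plus the claims it depends on."""
    name: str
    check: Callable[[dict, dict], bool]   # (intent, tx) -> bool
    depends_on: list = field(default_factory=list)


def validate(claims, intent, tx):
    """Evaluate every claim after its dependencies; return a status trace."""
    by_name = {c.name: c for c in claims}
    trace = {}

    def visit(name):
        if name in trace:
            return trace[name]
        claim = by_name[name]
        dep_results = [visit(dep) for dep in claim.depends_on]
        if any(result != "pass" for result in dep_results):
            trace[name] = "blocked"       # an upstream claim did not hold
        else:
            trace[name] = "pass" if claim.check(intent, tx) else "fail"
        return trace[name]

    for claim in claims:
        visit(claim.name)
    return trace


def first_divergence(trace):
    """Name of the first failed claim, or None if nothing failed."""
    return next((n for n, status in trace.items() if status == "fail"), None)


CLAIMS = [
    Claim("asset_matches", lambda i, t: t["asset"] == i["asset"]),
    Claim("amount_within_limit", lambda i, t: t["amount"] <= i["max_amount"]),
    Claim("recipient_allowed", lambda i, t: t["recipient"] in i["recipients"]),
    Claim("matches_intent", lambda i, t: True,
          depends_on=["asset_matches", "amount_within_limit",
                      "recipient_allowed"]),
]

intent = {"asset": "USDC", "max_amount": 50_000, "recipients": {"0xabc"}}
tx = {"asset": "USDC", "amount": 500_000, "recipient": "0xabc"}
trace = validate(CLAIMS, intent, tx)
```

Because each claim records its own status, a failed transaction points at a specific node rather than returning a bare rejection.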

This produces two effects. First, accountability: every agent action can be verified against a structured trace. If something goes wrong, you can identify exactly where the divergence occurred, in real time, before the transaction settles.

Second, and this is the part most people miss: it reduces compute over time. The validation graphs produce traces that enable early exit from redundant verification paths. As the system builds a richer understanding of which paths matter for which transaction types, it short-circuits the ones that do not. Verification becomes cheaper and faster the more it runs. Nava is not just a security cost. It is a compute efficiency gain that compounds.
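One way to picture the early-exit idea is a verifier that remembers which paths have ever been applicable to a given transaction type and stops running the ones that never are. The sketch below is an illustrative assumption, not a description of how Nava's system works: `PathStats`, the `min_runs` threshold, and the convention that a check returns `None` when it does not apply are all hypothetical. Note that it only skips paths that were consistently not applicable; it never skips a path merely because it has always passed.

```python
from collections import defaultdict


class PathStats:
    """Tracks, per (tx_type, path), how often the path was applicable."""

    def __init__(self, min_runs=3):
        self.min_runs = min_runs
        self.runs = defaultdict(int)
        self.relevant = defaultdict(int)

    def should_run(self, tx_type, path):
        key = (tx_type, path)
        # Keep running until there is enough history, or if it ever applied.
        return self.runs[key] < self.min_runs or self.relevant[key] > 0

    def record(self, tx_type, path, was_relevant):
        key = (tx_type, path)
        self.runs[key] += 1
        if was_relevant:
            self.relevant[key] += 1


def verify(tx_type, tx, paths, stats):
    """Run the paths that still matter; return (ok, names of paths executed)."""
    executed = []
    for name, check in paths.items():
        if not stats.should_run(tx_type, name):
            continue                      # short-circuit a redundant path
        result = check(tx)                # True, False, or None (not applicable)
        stats.record(tx_type, name, result is not None)
        executed.append(name)
        if result is False:
            return False, executed        # stop at the first failure
    return True, executed


PATHS = {
    "amount_limit": lambda tx: tx["amount"] <= 50_000,
    # Slippage only applies to transactions that specify a minimum output.
    "slippage": lambda tx: None if "min_out" not in tx else tx["min_out"] > 0,
}

stats = PathStats(min_runs=3)
history = [verify("transfer", {"amount": 100}, PATHS, stats) for _ in range(3)]
later = verify("transfer", {"amount": 100}, PATHS, stats)
oversized = verify("transfer", {"amount": 500_000}, PATHS, stats)
swap = verify("swap", {"amount": 100, "min_out": 95}, PATHS, stats)
```

After three plain transfers the slippage path is no longer run for that type, while the amount limit still catches an oversized transfer and swaps continue to exercise both paths.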

Looking Forward

Vitalik's framework positions Ethereum as the economic layer for AI-related interactions. By building on Ethereum's infrastructure, we enable AI agents to participate in the broader crypto economy while maintaining the verification and accountability mechanisms that make such participation safe. Our verification layer does not just prevent errors; it creates the trust foundation that allows AI agents to become legitimate economic actors, handling real capital with the same reliability expected of traditional financial infrastructure.

We see this evolving toward what Vitalik describes as "micro-scale mechanisms," where verification becomes so efficient and granular that every AI decision can be validated in real time. As blockchain scaling continues and cryptographic techniques advance, verified AI agents will handle routine financial operations at the trust level currently reserved for institutional systems.

The conversation in crypto right now — who owns the AI layer, which chain is the AI chain — is the wrong conversation. There is no single AI layer. There is a five-layer industrial stack being built at a scale that dwarfs anything crypto has produced. The question for any project in this space is not which layer to claim, but which layer you actually serve and what problem you solve there that the stack cannot solve without you.

Nava's answer is specific. AI agents are going to manage real capital and make consequential decisions. The infrastructure to keep them accountable does not exist yet. The model to verify that what an agent did matches what a human intended is a new category entirely. We are not building the AI chain. We are building the verification layer: the part of the stack that makes autonomous agents trustworthy enough to deploy.